SovereignAI: know thyself

The discussion on Sovereign AI has mainly been about the real estate of data centres and fibre lines, but it should be about data. And most of all it should be about geospatial data.

Much of the discussion on SovereignAI in Canada focuses on data centres and connectivity, and those are important, but we should not forget what those data centres will contain and the inferences they will yield. Far from being just a real estate play, SovereignAI is as much about the contents and knowledge used to power the AI as it is about the location of critical infrastructure. Sovereign AI offers an opportunity to create a deeper understanding of Canada, its people, resources, and opportunities.

Over the last few months, I have been writing about the need for a country, including my country, to understand itself. In The Back Forty, I suggested that Canada’s North, while vast and largely empty of people, is also our most valuable yet least monitored asset. In the past, when space was, in and of itself, a defence against prying eyes and ill intent, we could afford to let the unforgiving North be our deterrent. Now, with technological development and a warming climate, it is our turn to look after that space that has defended us for so long. In that article, I suggested that we use space to do that (well, satellites in space, at least).

In Deep Horizontals, I point out that the geospatial sector is obscured behind the vertical of GIS. I believe that geography is part of almost every decision we make as professionals, but the GIS sector has evolved into a siloed practice, leading many non-experts to seek an alternative to the profession. And in AI ate my GIS, I suggest that many will turn to modern AI tools as their solution. Thus, the question is: will the geospatial sector engage to build the AI maps of the future, or will it let AI companies themselves dictate the quality and appearance of these interfaces?

This line of questioning brings me to the notion of SovereignAI. If Canada is to develop an AI for Canada, a Sovereign capability, then shouldn’t that capability have an understanding of Canada’s changing human and physical geography built in? Shouldn’t we know ourselves?

(Geo)AI

In a recent presentation by Natural Resources Canada on their Planaura geospatial foundation model, I heard the presenter state very plainly that:

“A good AI model is a national asset.”

This statement is prescient. In the debate on what SovereignAI is, the fact that Canada has developed a foundation model capability is something we should be proud of and talk about. Foundation models are a critical part of the AI landscape (sorry, I couldn’t resist), but not by any means the full story. That said, the advances NRCan has made in this regard are noteworthy and valuable.

While Planaura is a wonderful example, the statement that a model can be a national asset is also critical, and again hints at an alternative way to consider the sovereignty of artificial intelligence. Our ability to parse pixels and points to understand and infer relationships is a part of knowing ourselves, and is therefore a sovereign capability.

Changing climates are having physical effects. Melting permafrost causes infrastructure to subside and, in some cases, literally dissolve. Being able to use a foundation model to look into the infrastructural future of a community under different climate scenarios is a capability that builds both municipal and defence resiliency. To use a phrase du jour, it is a true “dual-use capability.” While Earth observation, specifically InSAR technology, has been used to measure subsidence, applying a foundation model to this technology suggests the capability to project risk under changing climatic patterns. Given less supportive ground, which buildings, runways, roads, piers, water and power plants are most at risk?

As in so many use cases, GeoAI, or even just AI, isn’t the base technology but a layer of scale and fusion that enables easier data integration: an acceleration of today’s practices.

Data storage & distribution

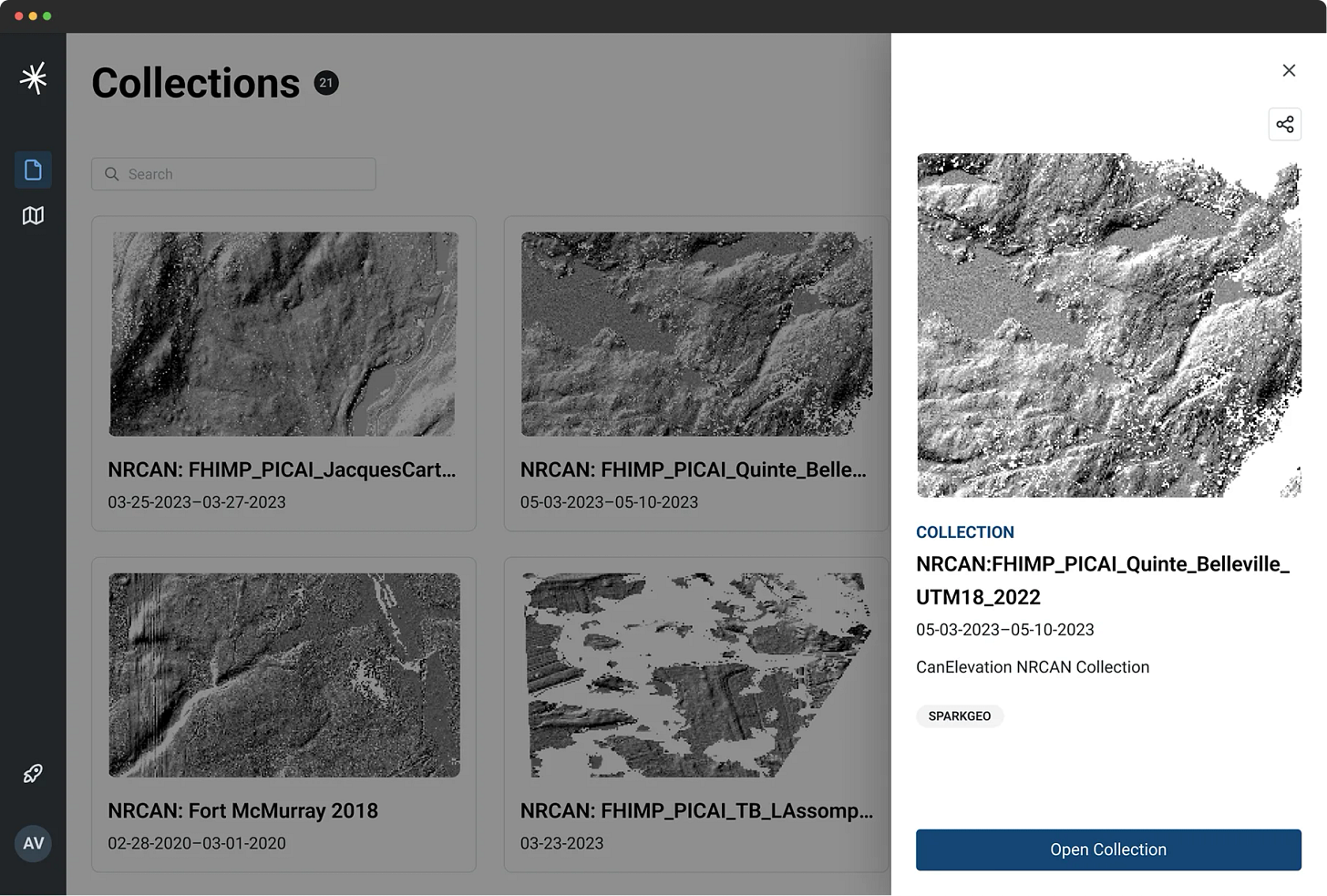

While I have suggested that the practice of GIS could be disrupted, I feel strongly that the value of authoritative geospatial data will only increase. In fact, the storage and distribution of geospatial data via secure and open APIs will allow both humans and machines to easily read data in commonly understood formats. The SpatioTemporal Asset Catalog (STAC) is a great example of a commonly accepted standard. Canada does seem to be taking this responsibility seriously, with effort going into the Digital Earth Canada product, but it is still unclear how this product will operate, who will have access to it, and when it will launch. That said, Canada has extensive data archives that could be highly valuable to geospatial AI technologies, some of which are available on Geo.ca.
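
As a rough illustration of what "commonly understood formats" can mean in practice, here is a minimal sketch of a SpatioTemporal Asset Catalog (STAC) style Item built as a plain Python dictionary, plus the kind of bounding-box check a human or a machine might run against a catalogue. The item ID, bounding box, and URL are hypothetical, and the helper names (`make_stac_item`, `missing_fields`, `intersects`) are illustrative rather than part of any STAC library.

```python
from datetime import datetime, timezone

# Top-level fields a STAC Item normally carries (assumption: STAC 1.0.0 layout).
EXPECTED_ITEM_FIELDS = (
    "type", "stac_version", "id", "geometry", "bbox",
    "properties", "links", "assets",
)


def make_stac_item(item_id, bbox, acquired, asset_href):
    """Build a minimal STAC-Item-shaped dict describing one raster asset.

    bbox is (west, south, east, north) in longitude/latitude degrees;
    acquired must be a timezone-aware datetime.
    """
    west, south, east, north = bbox
    stamp = acquired.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    return {
        "type": "Feature",
        "stac_version": "1.0.0",
        "id": item_id,
        "bbox": [west, south, east, north],
        "geometry": {
            "type": "Polygon",
            # Closed ring: the first and last positions are identical.
            "coordinates": [[
                [west, south], [east, south], [east, north],
                [west, north], [west, south],
            ]],
        },
        "properties": {"datetime": stamp},
        "links": [],
        "assets": {
            "data": {
                "href": asset_href,
                "type": "image/tiff; application=geotiff; profile=cloud-optimized",
            },
        },
    }


def missing_fields(item):
    """Return the expected top-level fields that the item lacks."""
    return [name for name in EXPECTED_ITEM_FIELDS if name not in item]


def intersects(item, query_bbox):
    """True if the item's bbox overlaps the query bbox (no antimeridian handling)."""
    w, s, e, n = item["bbox"]
    qw, qs, qe, qn = query_bbox
    return not (e < qw or w > qe or n < qs or s > qn)


# Hypothetical scene somewhere in Northern British Columbia.
example = make_stac_item(
    "scene-001",
    (-123.0, 53.5, -122.0, 54.5),
    datetime(2024, 7, 1, 18, 30, tzinfo=timezone.utc),
    "https://example.com/data/scene-001.tif",
)
print(missing_fields(example))
```

The point of the sketch is that the same small, predictable structure serves both a person browsing a catalogue and an AI agent deciding which scenes to fetch; a production catalogue would add collections, links, and access control on top.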

One key question is which companies, nations, or partners will be allowed access to that archive. In no uncertain terms, like good AI models, this data archive is a national asset and should be treated as such.

While Canada is developing a sovereign capability based on a national archive of geospatial data, this archive could also be used by others with less dignified values to develop the same capability. While I feel strongly that we should know ourselves deeply, I am less comfortable with others developing a comparable familiarity.

I applaud and support the invaluable contributions of ESA, NASA, and others to the development of scientific-grade global data products: a global public good. But there is a strong argument, within the context of sovereign capabilities, that some critical national products should be kept secure. As one would with critical infrastructure. As we all do with our personal health data.

At Sparkgeo, we feel strongly that in an AI-centric future, authoritative data distribution will be a common theme. That’s why we built our geospatial data distribution stack called Prescient. It’s called Prescient because structured cloud-like data distribution enables future-looking, AI-enabled applications. Without good data hygiene, we don’t get to access meaningful SovereignAI.

Geospatial, and owning the map

I tend to default to geospatial data because that is what I know best. But in the development of Sovereign capabilities, the same patterns will hold for other sizes and shapes of data. Finance, health, and sentiment data all inform a country's internal workings, shaping its management and policy.

That said, we should not forget the famously unreferenced cliche suggesting that 80% of data has a location attached to it. Given the proliferation of device-based data, ad-tech, connected vehicles, and satellite imagery, I would politely suggest that the number is now much higher (much, much, much higher). With that in mind, we need to return to the question of sovereignty and mapping. Who will write the maps of our country, and will these maps have access to authoritative data products?

We know that AI technology tends to adopt components of convenience to answer questions. In many ways, this is a very human behaviour. While we were shocked by OpenAI and friends seemingly stealing artistic IP from writers and artists, in the 2000s, Napster was doing the same thing to music. Perhaps we taught the machines to harvest data the way we would ourselves. Nevertheless, I would prefer to see our country benefit from the data we have acquired, which, in many ways, is about us. To that end, creating well-defined components that AI systems can consume responsibly would be a productive approach to the problem.

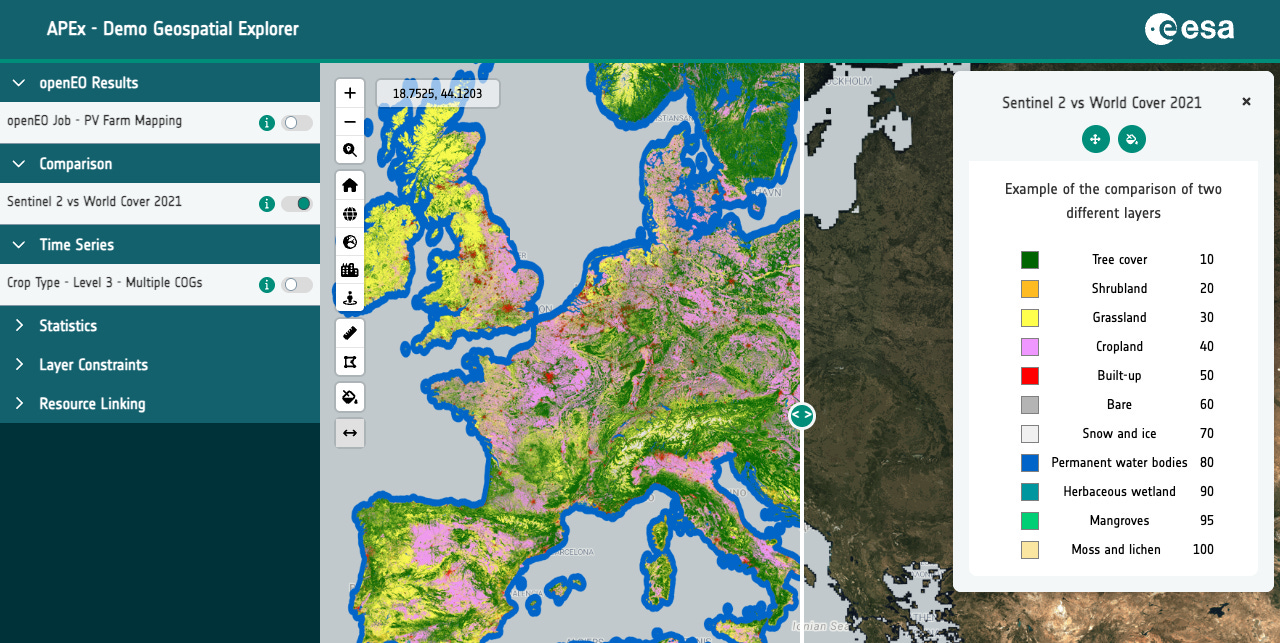

What would components look like? Well, at Sparkgeo, we have been involved in building a mapping component library for the European Space Agency called the APEx platform. This has a series of interface components that tie into data products in known, commonly understood ways. The intent here was to make scientists’ lives easier and to provide a common look and feel for ESA’s scientific output. However, this kind of structure also ensures that AIs do not make elementary geographic mistakes and that data standards are maintained.

From a geospatial community perspective, we let a web search company build the de facto consumer mapping product. Who will we let build the AI maps of the future?

ISR data fusion

If we move to the more secure discussion of surveillance platforms, we find the same problems, but on a larger scale. The US military suggests they will ultimately have a drone per infantry squad. These drones will look around corners and check over features. They are mini Intelligence, Surveillance, and Reconnaissance (ISR) platforms. But where does that data go? If the Canadian infantry is to adopt a similar approach to drones, then the sheer amount of data flow will become quite a burden. But in that data flow will be nuggets of intelligence which humans might easily miss.

This, combined with underwater vehicles, higher-altitude ISR platforms, satellites, vehicles, and people all collecting data, leads to a very confused “common operating picture.” If we throw in jamming and the fog of war, we are left with a hugely problematic software and connectivity picture. One that leaves our military in a confusing labyrinth of data sources, each with its own nuances. This is a complex mess for full-time analysts to untangle. Doing this in the field, under extreme pressure, in a mixed connectivity environment and hostile climate, is impossible. The common operating picture is one problem to solve; another is how that data is subsequently reviewed for after-action activity. Not only is the data to be visualized, but it has to be stored with extreme security.

In a similar problem set to that presented in the monitoring of vast spaces, the decentralized capture of secure data will lead to a massive intelligence-gathering nightmare. Thousands of drones will capture high-resolution, geospatial video in circumstances that will encourage review for intelligence purposes. We can solve the technical questions, but who will be able to watch all the videos? In the end, we know it will have to be machines directing algorithms to look for signs of enemy activity, IEDs, or even search-and-rescue targets.

The problem of data fusion is at the heart of the Earth observation product problem, and the dirty little secret is that not all geographic data lines up. In fact, most data does not align without some additional work; it is likely that GeoAI will play a major role in aligning georeferenced features through space and time for after-action analysis in a digital twin of an operation.
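
To make the alignment problem concrete, here is a minimal sketch of the simplest possible case: two datasets in the same projected coordinate system (metres) that differ only by a constant shift, with a handful of features already matched between them. Real misalignment usually also involves rotation, scale, terrain distortion, and time offsets, so this is an illustration of the idea and not a description of how any GeoAI system works. The function names and coordinates are hypothetical.

```python
from math import hypot


def estimate_offset(reference, observed):
    """Mean (dx, dy) shift that moves observed points onto their reference matches."""
    if len(reference) != len(observed) or not reference:
        raise ValueError("need equal-length, non-empty lists of matched points")
    n = len(reference)
    dx = sum(r[0] - o[0] for r, o in zip(reference, observed)) / n
    dy = sum(r[1] - o[1] for r, o in zip(reference, observed)) / n
    return dx, dy


def apply_offset(points, offset):
    """Shift every (x, y) point by the given (dx, dy) offset."""
    dx, dy = offset
    return [(x + dx, y + dy) for x, y in points]


def rmse(reference, observed):
    """Root-mean-square distance between matched point pairs, in coordinate units."""
    n = len(reference)
    squared = sum(hypot(r[0] - o[0], r[1] - o[1]) ** 2
                  for r, o in zip(reference, observed))
    return (squared / n) ** 0.5


# Hypothetical matched features: surveyed positions vs. positions from drone video.
surveyed = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0)]
from_drone = [(3.0, -4.0), (13.0, -4.0), (13.0, 6.0)]

shift = estimate_offset(surveyed, from_drone)
aligned = apply_offset(from_drone, shift)
print(shift, rmse(surveyed, from_drone), rmse(surveyed, aligned))
```

The residual error before and after the shift is the useful number here: it tells an analyst (or a machine) whether two sources can be trusted to describe the same place at the same time.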

The biggest problems in Earth observation today are all in software. To access robust Geo or SovereignAI, we will need to address some of these complexities of scale.

Training data

As we think about AI and Sovereignty, we should also consider how data becomes AI, and how (and where) we build training data sets. I suppose this is somewhat back to the original case of real estate. But this basic labelling activity is still essential, and much of it is still done offshore. This potential hole in our Sovereign AI strategy can be solved by bringing back some of those technology jobs to Canada, especially around more sensitive subjects. There will be a cost, but there is also a tremendous benefit in shifting technology jobs to lower-cost environments (outside Canada’s big cities, where housing is still affordable) and even to more rural settings. Ultimately, this activity can proliferate technology jobs across the country, reducing barriers to entry and opening the door to a broader tech economy. Rural Canada, while famous for hewing wood and carrying water, could also be a decentralized network of data creators. I say this as someone who lives in Northern British Columbia: with robust, redundant connectivity, data could flow faster than any natural resource.

Decentralization for resilience

The idea of distributing the capture of training data to more rural settings is predicated on having a robust connectivity strategy for Canada. At every level of economic and social development, this is absolutely paramount. Access to connectivity is access to knowledge, and it is clearly a point of equity for Canadian citizens.

The added benefit of robust national connectivity is the opportunity to decentralize computing resources, enabling the internet to function as it was designed: a network of decentralized computing hubs. With decentralization comes resilience and, by extension, independence.

Procurement as enabler, not barrier

This is a note on SovereignAI, and while applicable to any country, it’s certainly presented through a Canadian lens. With that in mind, investing in Canadian companies to build the Sovereign AI, or the Sovereign GeoAI, would be logical (if somewhat self-serving of me to say). There has been a trend towards the Maple-washing of other nations’ technology companies, with their regional hubs in Toronto or Ottawa, trying to look and sound Canadian. I am sure wonderful people work in these establishments, but where are their owners and shareholders? Where are their executives? Whose software are they selling or reselling?

Companies like mine, Sparkgeo, have, for years (since 2010, for me), been forced to work for commercial entities or for foreign governments, beaten out of the Canadian Government market by less-qualified but better-funded competitors. While we’ve worked for ESA, UK Space, and various Saudi Arabian institutions, we found that the Canadian Government procurement system could hardly have been better designed to prevent small Canadian technology companies from engaging. I believe companies should be competitive, and working for commercial companies for fifteen years has taught us how to deliver extreme value under high pressure. So, when I see how the Canadian Government is generally treated by the traditional cadre of service providers, I am disappointed.

However, I am excited to see that teams like Sparkgeo might now have an opportunity to engage, and I look forward to demonstrating how a small team of experts (rather than contractors) can raise the expectations of a nation.