Fruit Salad

Specific observations on segmenting the geospatial market.

As discussed in the last post, we can approach segmentation from:

The resource-based view -> What can you (your company/org) do, and what are you good at?

The market-based view -> What does the market need?

In this discussion, I wanted to look at how I see the geospatial market. In this analysis, you will likely see the bias I have. You will also see my blind spots, and you may well spot some logical fallacies, too! Again, we all have different and unique perspectives on the same landscape. Sometimes, we see something because we want to, not because it is there.

Your disagreement is helpful to this body of knowledge, so please feel free to share it with me. Hopefully, I challenge your point of view a little. Together, we can take some time to consider and learn. Open minds learn more.

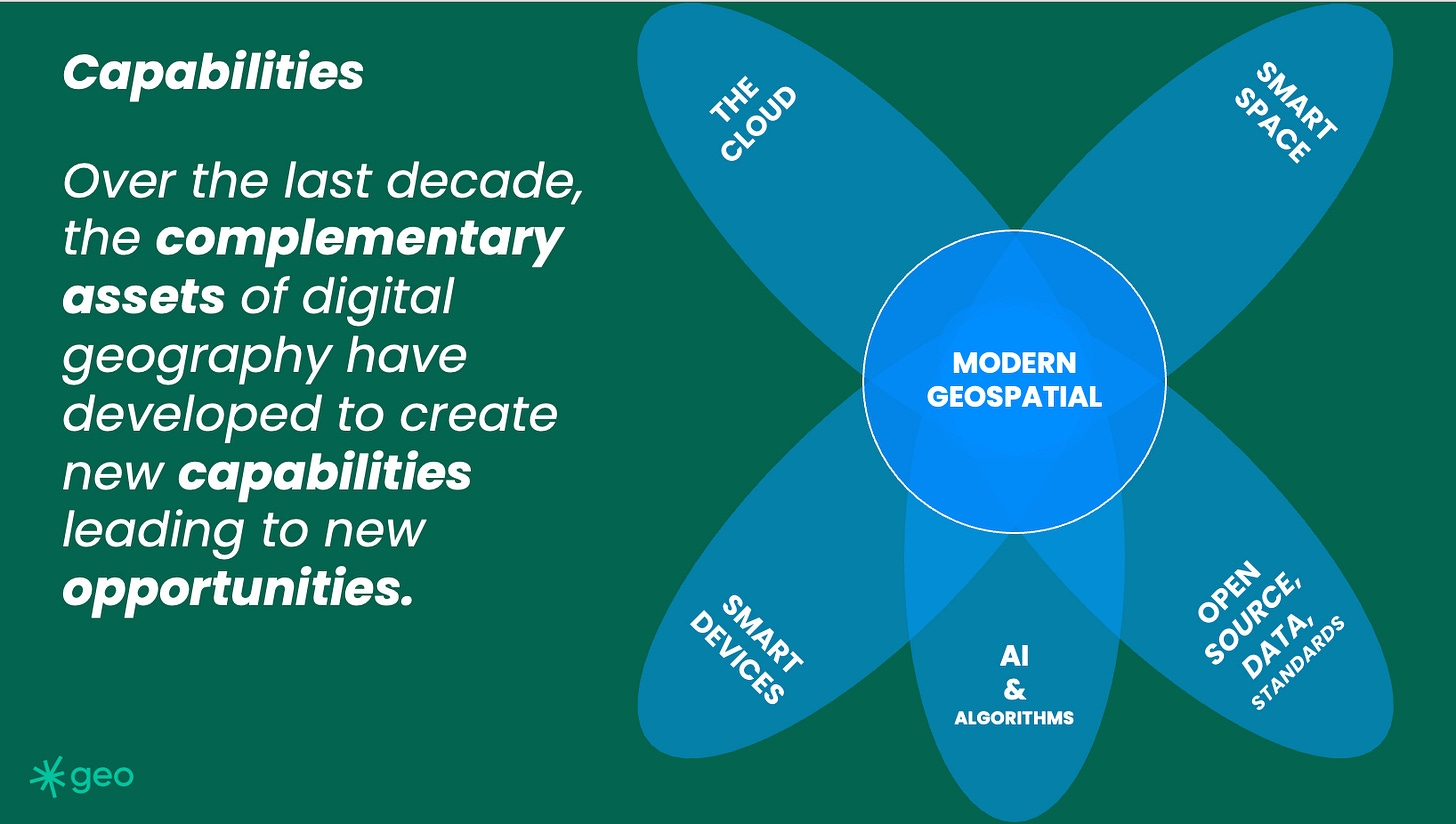

As I have stated numerous times, the geospatial community of practice has changed drastically in the last decade. Is this change less obvious in some prominent corners of our industry? Possibly. What is clear is that the development and convergence of a series of complementary assets have resulted in a series of net new capabilities.

By this, I mean we can do things we could not do before. Indeed, the things we told our friends were possible a decade ago perhaps now actually are. Still, that is terribly wishy-washy. So, what can we actually do now?

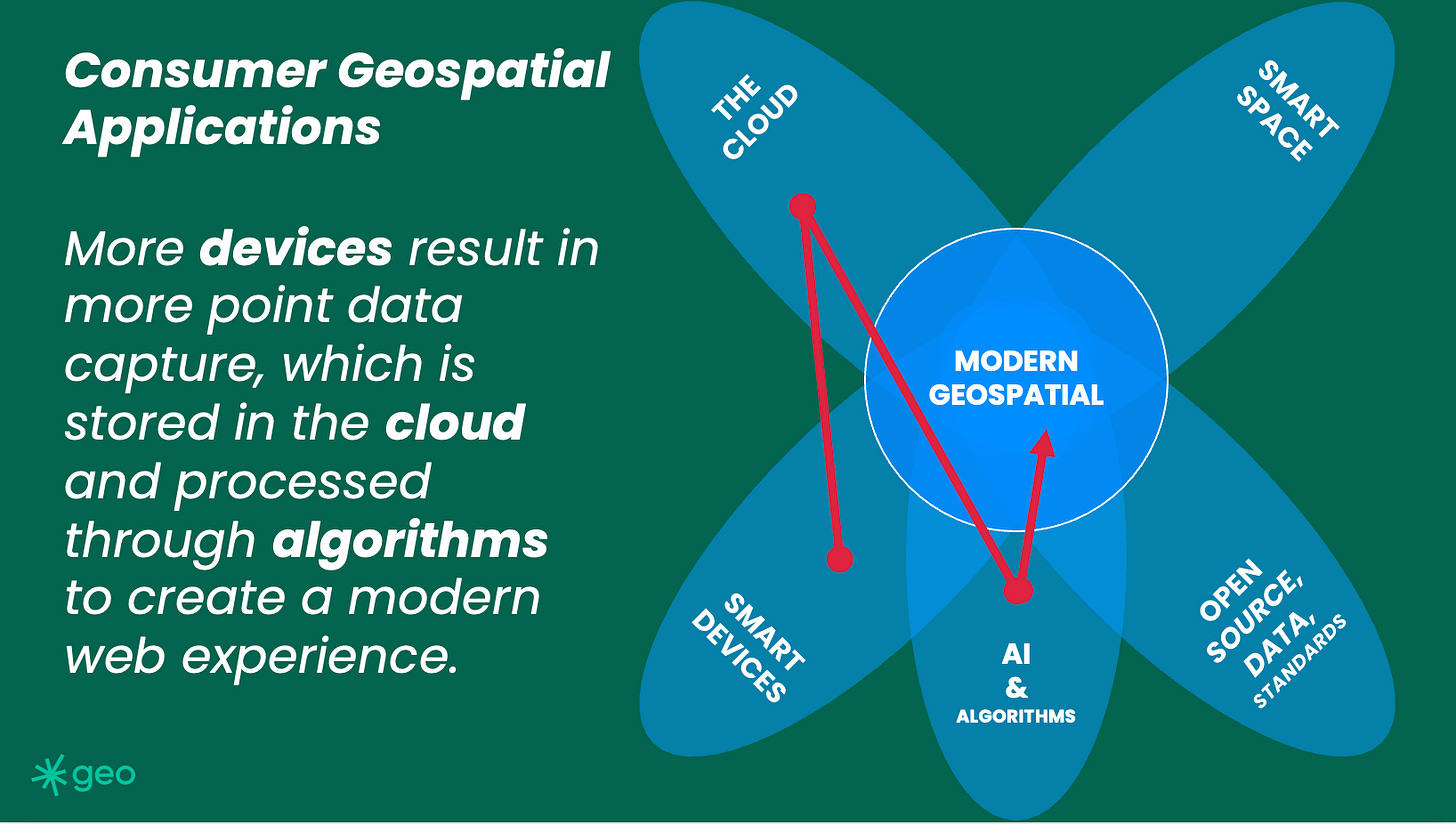

Scale is real.

We can do a lot on the cloud now. Critically, this means we can design systems cheaply and then deploy them to run at scale (expensively?). This simple sentence belies the sheer amount of opportunity that the cloud offers. Compute barriers are now effectively removed. Of course, there are still complexity barriers. Difficult things are still difficult. But any data weight or compute effort barriers are now vastly reduced. This has enabled advances such as large language models, whose training is really a massive brute-force exercise.

There was a time when storing vast amounts of data was a significant barrier to geospatial applications. This is not the case now. Interestingly, I still hear geospatial data being called big data. It was once; it is not now.

Data pipelines are real.

We can create chains of computing events which automatically transform data into insight. These chains could be driven by any number of data sources, spatial or otherwise, but having sensor networks detect events and deliver insight to numerous users is an actual capability. Think wildfire alerts or delivery proximity notifications.

Now, remember I am talking about systems which operate for thousands or millions of users, not more rudimentary GIS-based systems which might be designed for a handful of workers in low web-traffic environments.
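To make the idea of a pipeline a little less abstract, here is a minimal sketch of the wildfire-alert example: sensor readings go in, a detection step filters them, and a proximity step decides who gets notified. Everything in it is an assumption for illustration: the `Reading` and `Subscriber` types, the 60 °C threshold, and the 25 km radius are all hypothetical, and a real system at the scale described above would sit on streaming and messaging infrastructure rather than a Python loop.

```python
import math
from dataclasses import dataclass
from typing import Iterable, Iterator, Tuple

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, good enough for alerting


@dataclass(frozen=True)
class Reading:
    """Hypothetical sensor reading."""
    sensor_id: str
    lat: float
    lon: float
    temperature_c: float


@dataclass(frozen=True)
class Subscriber:
    """Hypothetical user who wants alerts near a location."""
    name: str
    lat: float
    lon: float


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def detect_hotspots(readings: Iterable[Reading], threshold_c: float) -> Iterator[Reading]:
    """Stage 1: keep only readings at or above the (assumed) threshold."""
    return (r for r in readings if r.temperature_c >= threshold_c)


def build_alerts(
    readings: Iterable[Reading],
    subscribers: Iterable[Subscriber],
    radius_km: float = 25.0,
    threshold_c: float = 60.0,
) -> Iterator[Tuple[str, str, float]]:
    """Stage 2: pair each hotspot with every subscriber inside the radius."""
    subscribers = list(subscribers)  # reused for every hotspot
    for hot in detect_hotspots(readings, threshold_c):
        for sub in subscribers:
            distance = haversine_km(hot.lat, hot.lon, sub.lat, sub.lon)
            if distance <= radius_km:
                yield (sub.name, hot.sensor_id, round(distance, 1))


if __name__ == "__main__":
    demo_readings = [
        Reading("sensor-a", 40.0, -111.0, 75.0),  # hot enough to trigger
        Reading("sensor-b", 40.0, -111.0, 20.0),  # ordinary reading
    ]
    demo_subscribers = [
        Subscriber("nearby-user", 40.1, -111.0),
        Subscriber("faraway-user", 19.0, 72.8),
    ]
    for name, sensor_id, km in build_alerts(demo_readings, demo_subscribers):
        print(f"Alert {name}: hotspot at {sensor_id}, {km} km away")
```

The nested loop is fine for a handful of users, but it is exactly the part that would need a spatial index or a managed geofencing service once the subscriber list runs into the thousands or millions.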

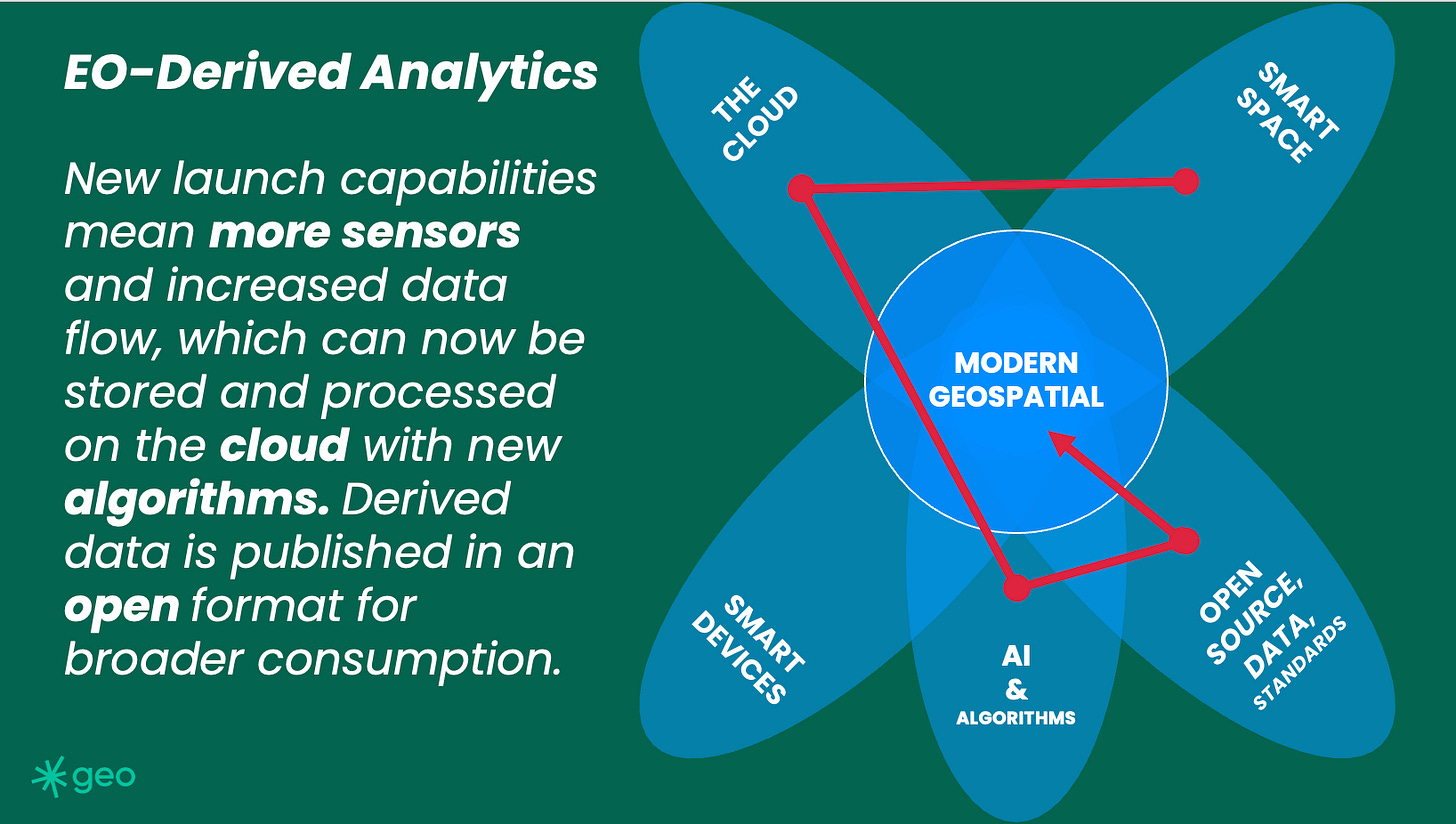

When considering how these systems might interact with people, I think about consumer geospatial applications. The Holy Grail or North Star of any EO company or cloud-centric geospatial organization should be to get pixels or pixel-derived data into the hands of everyday, non-expert people. That broad adoption will drive a resurgence in the faltering EO economy.

The querying of massive data lakes is now possible.

As discussed, there are gazillions of satellites in the sky right now. This constellation of sensing capacity downlinks terabytes of data every day, indeed, every hour. The net result is a massive amount of data being stored "somewhere," which is entirely useless without the ability to search, find, or read it. However, now we can do this. There is technology to catalogue and search vast troves of imagery, vector, and IoT data. SpatioTemporal Asset Catalogs (STAC) immediately come to mind.
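At its core, the kind of search a spatio-temporal catalogue offers is "what do you have over this place, in this time window?" The sketch below shows that query against a tiny in-memory list. It is not the STAC API or any real client library; the `Item` type, its field names, and the sample records are hypothetical, and the bounding-box test assumes boxes that do not cross the antimeridian.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Iterable, List, Tuple

# Bounding box as (west, south, east, north) in degrees.
BBox = Tuple[float, float, float, float]


@dataclass(frozen=True)
class Item:
    """Hypothetical catalogue record: one captured scene."""
    item_id: str
    bbox: BBox
    captured: datetime


def bboxes_intersect(a: BBox, b: BBox) -> bool:
    """True if two boxes overlap or touch (no antimeridian handling)."""
    return a[0] <= b[2] and b[0] <= a[2] and a[1] <= b[3] and b[1] <= a[3]


def search(items: Iterable[Item], bbox: BBox, start: datetime, end: datetime) -> List[Item]:
    """Return items that overlap the area of interest within the time window."""
    return [
        item
        for item in items
        if bboxes_intersect(item.bbox, bbox) and start <= item.captured <= end
    ]


if __name__ == "__main__":
    utc = timezone.utc
    catalogue = [
        Item("utah-2023", (-112.2, 40.5, -111.6, 40.9), datetime(2023, 6, 1, tzinfo=utc)),
        Item("utah-2021", (-112.2, 40.5, -111.6, 40.9), datetime(2021, 6, 1, tzinfo=utc)),
        Item("mumbai-2023", (72.7, 18.8, 73.0, 19.3), datetime(2023, 6, 2, tzinfo=utc)),
    ]
    area_of_interest = (-112.0, 40.6, -111.8, 40.8)
    hits = search(
        catalogue,
        area_of_interest,
        datetime(2023, 1, 1, tzinfo=utc),
        datetime(2023, 12, 31, tzinfo=utc),
    )
    for item in hits:
        print(item.item_id)
```

A linear scan like this obviously does not survive contact with billions of records. The point of a real catalogue is that the same question gets answered through spatial and temporal indexes instead.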

Machine Learning is real.

The sheer amount of captured data is fantastic, but what can we do with it? Unfortunately, we are still training our machines. But with that training comes power. And I would argue that training machines is a great application for the lonely pixels which get captured but are never looked at.

Captured by a CCD in the sky, but never looked at by a human eye?

Limited or focused analytics are now real.

General AI is not real (as of November 8th, 2023), which, for now, as we shake out the ethics, is probably a good thing. But limited analytics are reasonable and very possible. We still have difficulties when we apply earth observation analytics across geographies. For example, car counting (the “where is the nearest Starbucks” of EO) in Utah is a different algorithm from car counting in Mumbai.

However, these algorithms could be run in parallel on different imagery sources. If someone were so inclined, they probably could count all the cars on our planet. But, clearly, that's not useful. Or, at least, I have not thought up a good enough business reason to spend the astronomical amount of money on the EO data necessary to run this algorithm. But yes, the capability now exists. Yay for us.

I could go on. I started this article a few months ago, then found myself distracted. Since then, wars have started, new LLMs have been announced, companies have started, and others have faltered. The point is that as observers of our community, our context constantly changes; in that change, different opportunities ebb and flow.

Keep watching and keep commenting.